Special Issue Announcement: DeepILIA Team Invites Submissions for "Multimodal AI" in CMES 📝

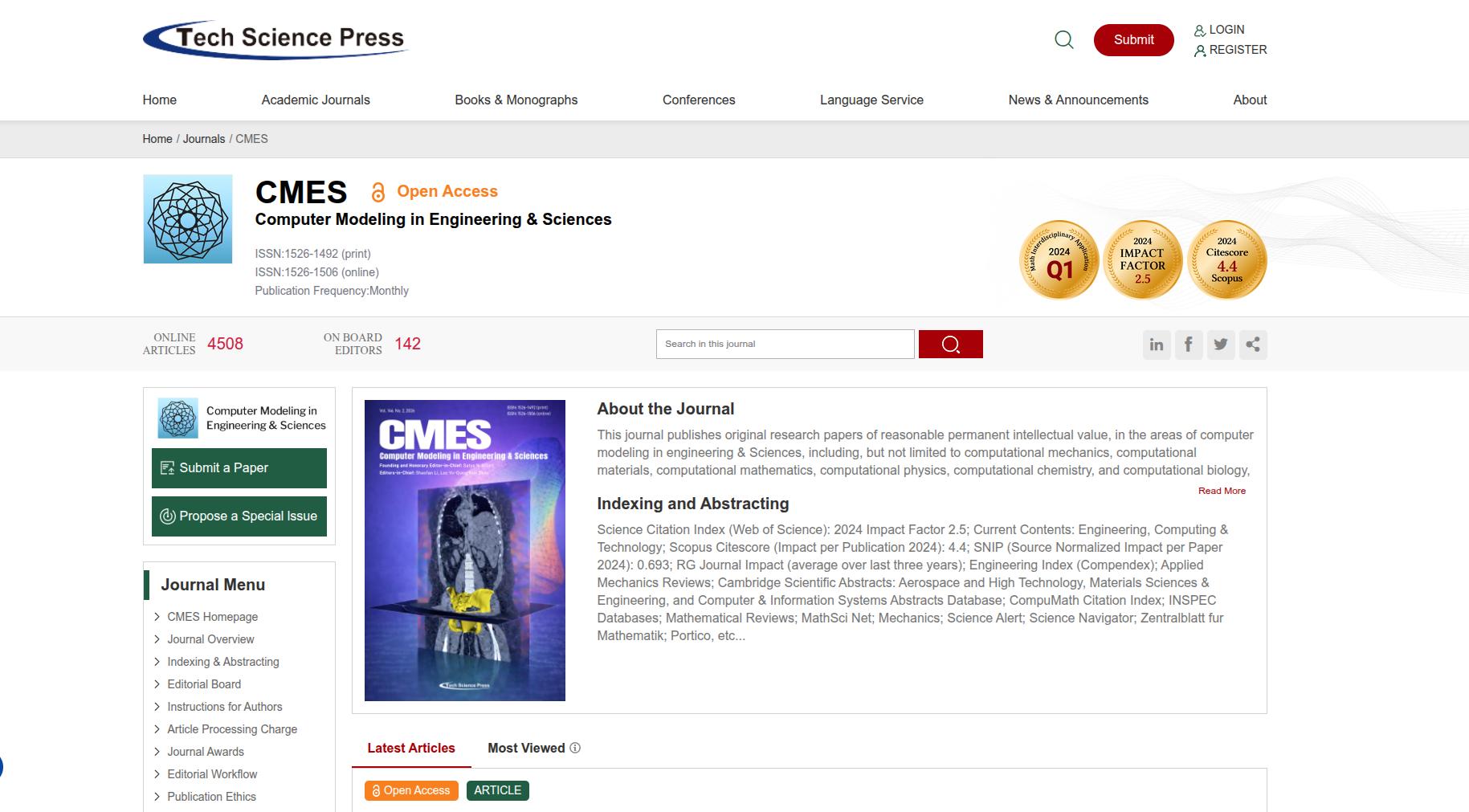

We are excited to announce that the DeepILIA team is leading a dedicated Special Issue in the prestigious journal Computer Modeling in Engineering & Sciences (CMES). Entitled "Explainable Multimodal AI: Interpretability, Generative Modeling, and Trustworthy Intelligent Systems," this initiative aims to push the boundaries of how AI systems perceive and interact with our complex world.

In an era where data is no longer limited to a single format, the shift toward multimodal learning is essential. While single-modality AI often struggles with the ambiguity of human communication, multimodal systems integrate text, images, video, and audio to provide a more holistic and context-aware understanding. Our goal with this Special Issue is to showcase research that moves these technologies from theoretical models to robust, real-world solutions.

We are specifically looking for original research and high-quality review papers that address:

Multimodal Fusion Techniques: Innovative ways to combine diverse data streams. 🧩

Cross-modal Representation: How AI can "translate" understanding between different types of data. 🔄

Explainable & Trustworthy AI: Ensuring these complex systems remain transparent, fair, and reliable. ✅

Practical Applications: Impactful use cases in healthcare, autonomous driving, and social media analysis. 🏥🚗

As members of the editorial team for this issue, we invite our colleagues, fellow researchers, and the global scientific community to submit their latest findings. This is a unique opportunity to contribute to a collection that will shape the standards for the next generation of intelligent systems.

Submissions are now open through the CMES online system. We look forward to reviewing your innovative work and collaborating to bridge the gap between academic research and practical, unbiased AI implementation.

Let’s build the future of multimodal intelligence together! 🤝✨